From PoCs to real results: Scaling GenAI with measurable impact

Unlike conventional systems, GenAI solutions are probabilistic, highly sensitive to input variation, and often tied to external providers. These complexities raise important questions:

- How do you scale GenAI responsibly?

- How do you ensure it delivers measurable business value?

We believe GenAI isn’t about just building new sets of tools — it’s a strategic capability. And like any capability, it requires structure, alignment, and operational rigor to deliver on its promise.

In collaboration with our partners at Pay-i, here are five key considerations to help organizations mature toward long-term value and scalability across their AI programs:

1. Start with business outcomes, not just model performance

The success of any GenAI initiative starts and ends with business value. Before thinking about prompts, models, or architecture, ask:

What problem are we solving, and how will we measure impact?

Whether it’s increasing call center efficiency, accelerating product development, or enhancing customer experiences, each use case should tie to clear, trackable KPIs — like time to resolution, conversion rates, or CSAT improvements.

This alignment doesn’t simply justify investment — it shapes design decisions, informs evaluation criteria, and ensures teams stay focused on outcomes, not just outputs.

2. Plan for operational complexity — especially latency and throughput

Traditional systems typically optimize for end-to-end latency. With GenAI, it’s more nuanced:

- Time to first token (TTFT): How quickly the model begins responding

- Inter-token latency: The speed at which tokens are streamed to the user

- Time to first completion token: Especially critical for reasoning tasks where internal computation delays output

- Output tokens per second (OTPS): A function of prompt complexity and model context window

Each of these has a direct impact on user experience — and often, business value. Slower response times can reduce adoption, frustrate users, or break workflows.

Throughput is equally critical. Models are subject to rate limits — requests per minute, tokens per minute — which affect scalability. Larger models often support fewer concurrent users.

Tip: Build with performance monitoring from day one. Track these latency metrics separately to pinpoint friction points.

3. Design for resilience in a volatile ecosystem

GenAI systems depend on third-party providers — many of which are evolving rapidly themselves. Availability can fluctuate. Models can change behavior with version updates. Even prompt reusability across models isn’t guaranteed.

Subtle shifts — like a tweak to a model’s temperature setting — can change the tone or accuracy of responses. Entire use cases can be disrupted overnight if resilience isn’t designed in.

A resilient architecture accounts for:

- Provider outages or rate limiting

- Model version drift

- Failover strategies (e.g., fallback models or internal hosting)

- Prompt and model abstraction layers for flexibility

Tip: Build prompts modularly and use tooling to detect shifts in model behavior over time.

4. Make evaluation a continuous process

Measuring GenAI output is fundamentally different from measuring traditional system behavior. You’re not evaluating if the system worked — you’re evaluating how well it worked.

Popular methods like “LLMs evaluating LLMs” are easy to scale but often unreliable. Human feedback is still essential, especially for complex or customer-facing use cases.

What complicates this further is criteria drift — the natural evolution of what “good” looks like over time. As business goals, user expectations, or compliance standards shift, evaluation frameworks need to adapt.

What to implement:

- Human-in-the-loop scoring for subjectivity

- Structured evaluation datasets

- Ongoing review of what “success” means for each use case

Tip: Assign business owners to co-own evaluation criteria alongside technical leads. This keeps alignment tight.

5. Maintain visibility into cost — and value

Cost in GenAI is fluid. It’s not just a question of usage — it’s a function of:

- Model pricing structures (which is often more complex than tokens in, tokens out)

- Prompt length and output verbosity

- Provider billing specifics or licensing

- Usage spikes from production deployments

Forecasting cost is difficult. Explaining it to business stakeholders is harder. To optimize business value, organizations should evaluate the cost to deliver each GenAI use case against the measurable KPI improvements it enables. Without transparency, teams can’t optimize — and trust erodes.

Tip: Track cost per use case, not just per model. When possible, correlate cost with value (e.g., cost per resolved case, cost per qualified lead).

The path forward

Successfully managing GenAI isn’t just about prompts or model selection — it’s about building the right systems to drive value at scale. Through our work with customers, we’ve helped organizations move beyond pilot projects to operational maturity, navigating the complexities of scale, governance, and ROI.

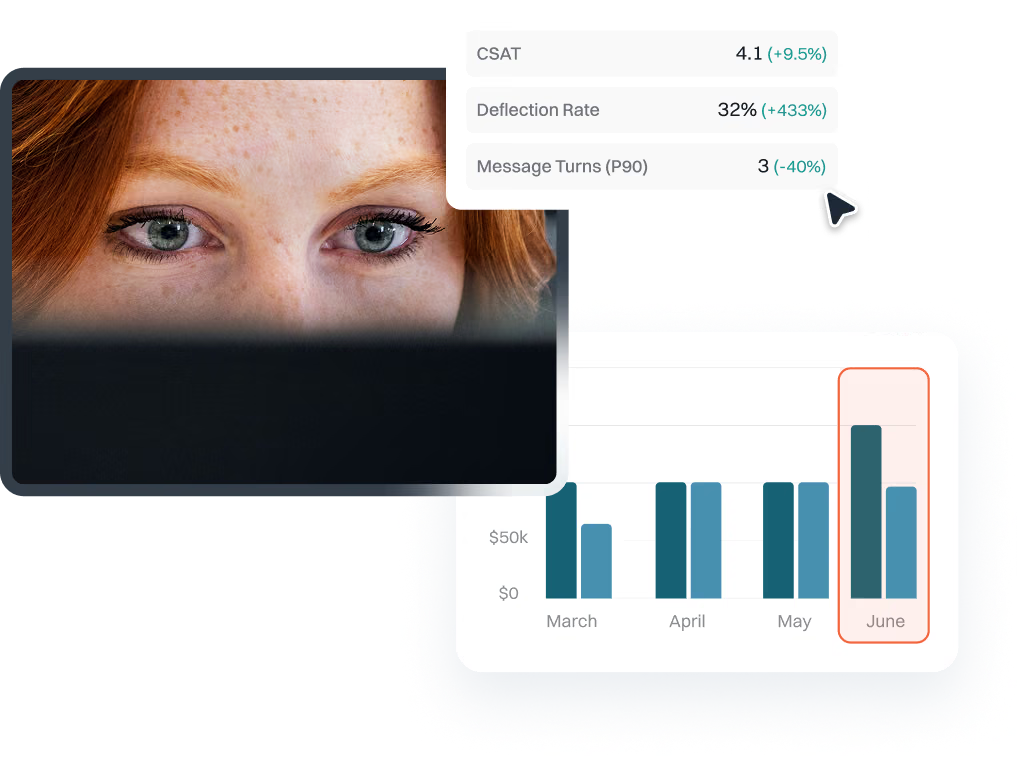

A key part of that journey is visibility. We’ve consistently seen that tracking usage, performance, and cost across teams is a major hurdle. That’s why we partner with platforms like Pay-i, which helps organizations manage entire GenAI use cases from a single dashboard. With Pay-i, clients can monitor KPIs, align spend to business outcomes, and quantify the value generated — all essential for scaling GenAI responsibly and sustainably.

By focusing on transparency, performance metrics, and cross-provider management, businesses can unlock GenAI’s full potential while staying in control of complexity and cost.